Note

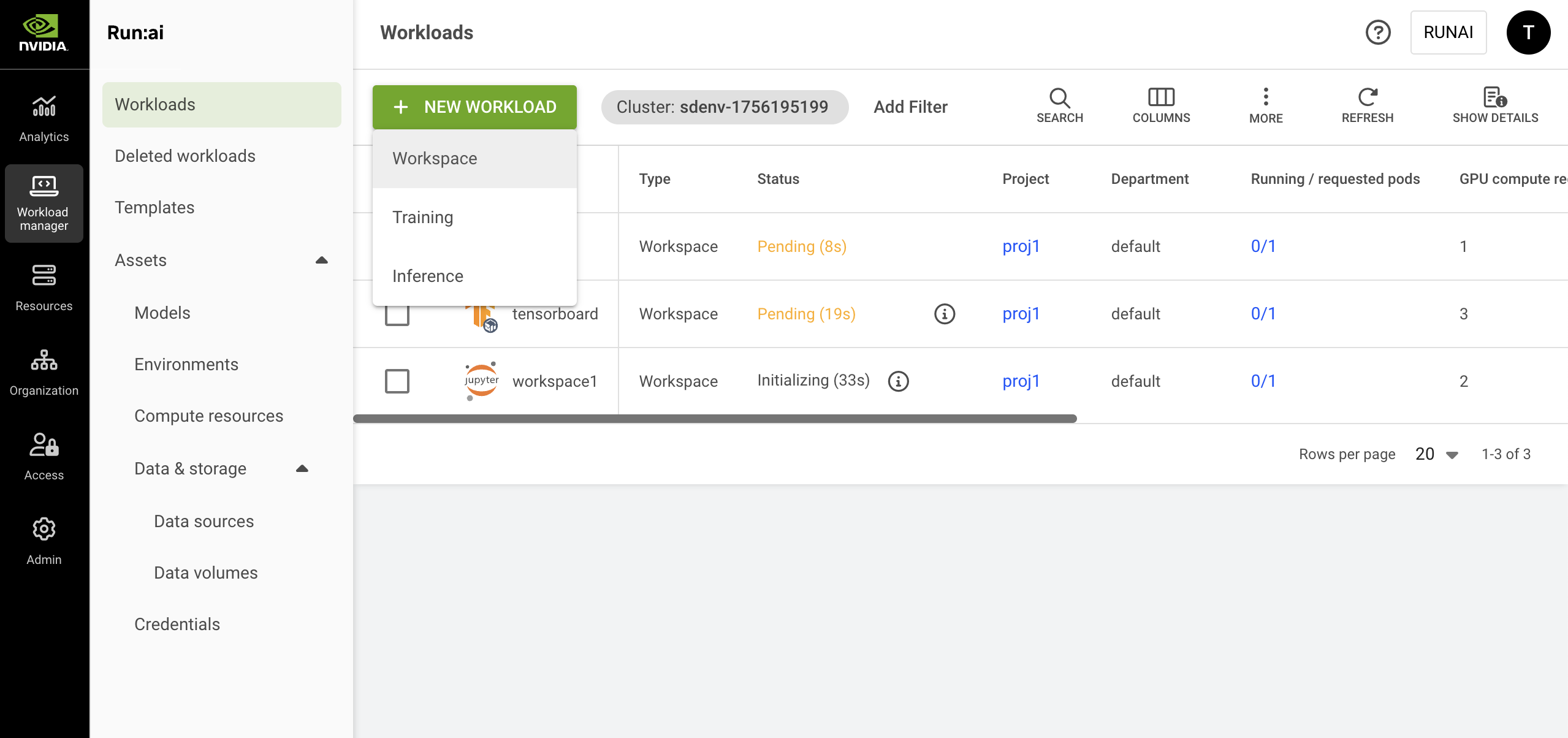

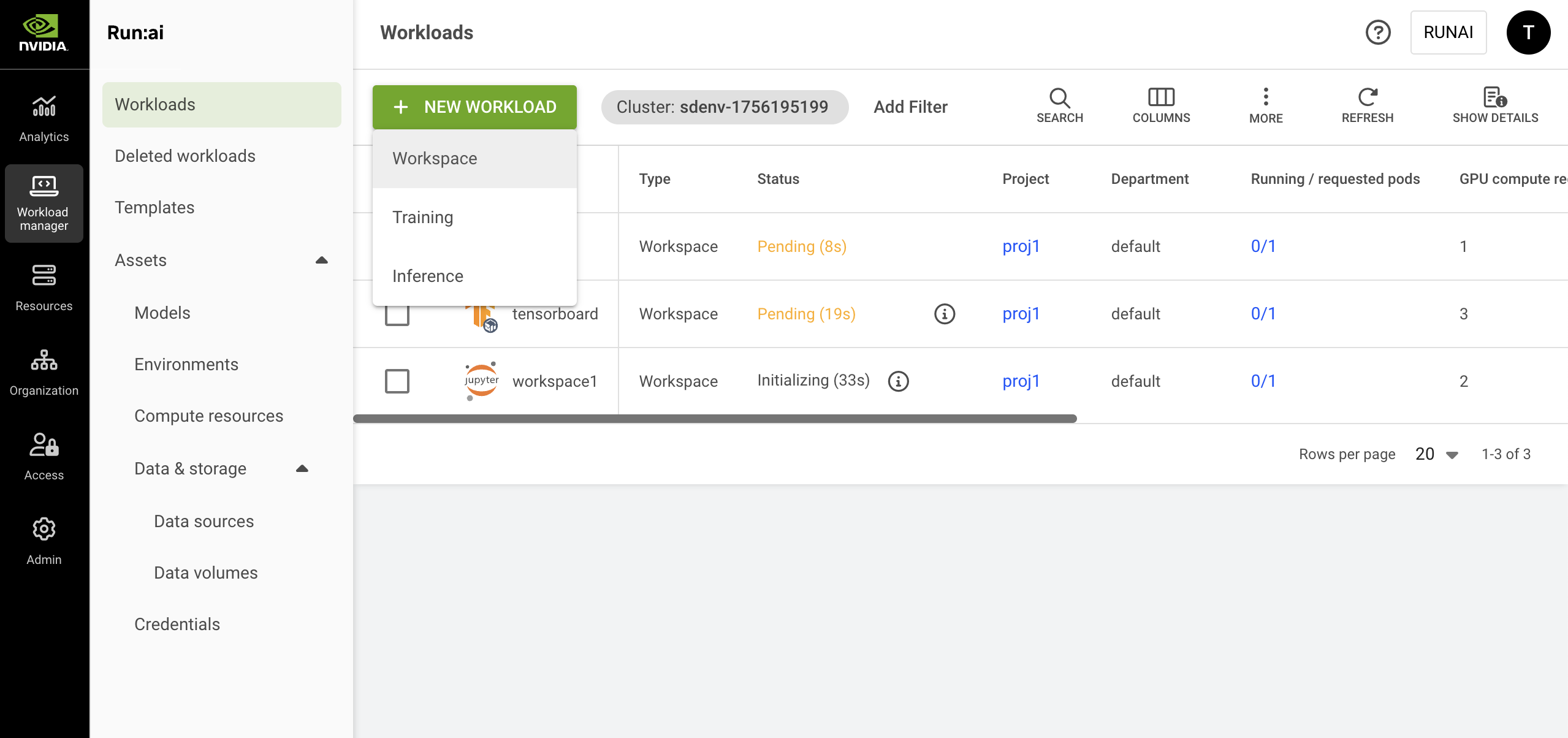

You cannot create a workspace directly from a template if the template is missing any mandatory field, or if an organizational policy restricts the use of certain fields in that template.